Trust score architecture: the framework most DevEx teams are missing in agentic development

When does an agent earn the right to act without a human?

I've sat in enough DevEx reviews to know the pattern. Someone pulls up the DORA dashboard. Someone else mentions SPACE. A third person asks about DPE scores. Everyone nods. Nobody asks the one question that actually matters: are we removing friction from the developer's day, or are we just measuring it more precisely? Frameworks are not a strategy. Autonomy is the goal. Everything else is scaffolding.

Dimension 1, “Operational metrics”: Is the platform actually moving?

Dimension 2, “Technical metrics”: Is the code actually good?

Dimension 3, “Trust & quality metrics”: Are developers actually trusting the agents, and trusting them appropriately?

Dimension 4, “Business metrics”: Does it actually matter to the org?

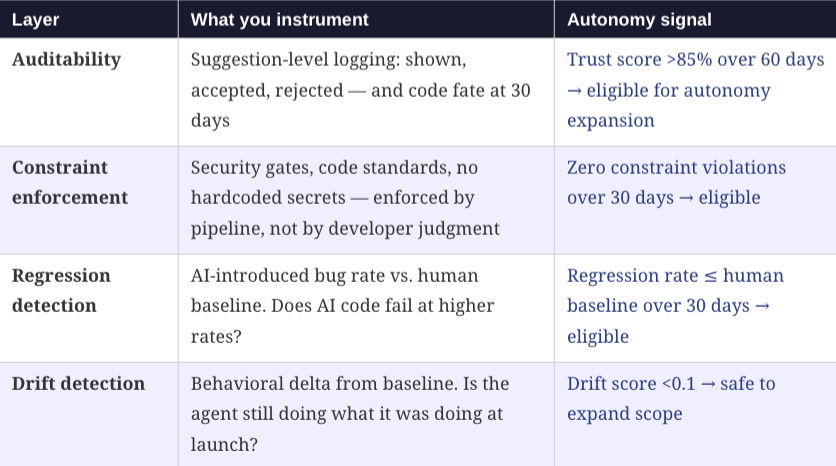

The four dimensions above tell you what to measure. The trust score architecture tells you what to do with the measurements: specifically, how to use them to make the most consequential decision in agentic development. When does an agent earn the right to more autonomy?

Here is the architecture I use. It has three enforcement layers and clear expansion criteria. All three layers must be green before you expand agent scope.

The expansion criteria in practice look like this: trust score above 85% over 60 days, zero constraint violations over 30 days, regression rate at or below human baseline over 30 days. When all three conditions hold simultaneously, the agent has earned a scope expansion. Not before.

This is not bureaucracy. This is the mechanism that makes agentic development safe enough to scale. Every team I’ve seen try to skip this, granting autonomy based on demo performance rather than production track record, has eventually faced an incident that walked the autonomy back further than where they started.
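To make the gate concrete, here is a minimal sketch of the three expansion criteria as a single check. Everything named here (`AgentTrackRecord`, its fields, `expansion_checks`, `may_expand_scope`) is hypothetical and for illustration only. It also assumes the 60-day trust score and the 30-day regression rates have already been aggregated into single numbers; how you aggregate them is a separate design decision this sketch does not settle.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentTrackRecord:
    """Hypothetical rolling-window summary for one agent workflow."""
    trust_score_60d: float            # aggregated trust score, 0.0 to 1.0
    constraint_violations_30d: int    # violations counted over 30 days
    agent_regression_rate_30d: float  # agent regression rate over 30 days
    human_regression_rate_30d: float  # human baseline over the same window


# Threshold taken from the criteria above: trust score above 85%.
TRUST_THRESHOLD = 0.85


def expansion_checks(record: AgentTrackRecord) -> dict[str, bool]:
    """Evaluate each criterion separately so a failed gate is explainable."""
    return {
        "trust_score": record.trust_score_60d > TRUST_THRESHOLD,
        "constraint_violations": record.constraint_violations_30d == 0,
        "regression_rate": (
            record.agent_regression_rate_30d
            <= record.human_regression_rate_30d
        ),
    }


def may_expand_scope(record: AgentTrackRecord) -> bool:
    """All three conditions must hold simultaneously. Not before."""
    return all(expansion_checks(record).values())


if __name__ == "__main__":
    # Illustrative numbers only, not real data.
    record = AgentTrackRecord(
        trust_score_60d=0.91,
        constraint_violations_30d=1,
        agent_regression_rate_30d=0.02,
        human_regression_rate_30d=0.03,
    )
    failed = [name for name, ok in expansion_checks(record).items() if not ok]
    print("expand" if may_expand_scope(record) else f"hold: {failed}")
```

Returning the per-criterion results, rather than one boolean, is a deliberate choice in this sketch: when an expansion is denied, the team can see which condition blocked it instead of arguing about the overall verdict.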

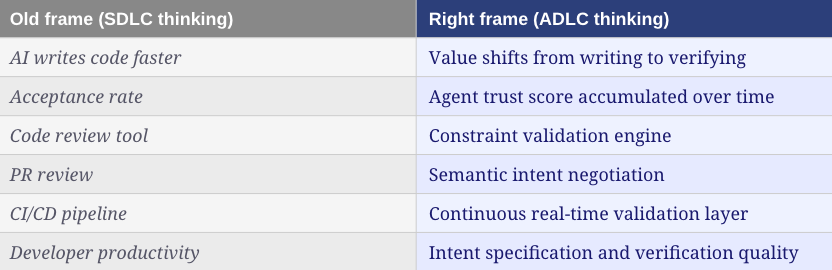

The vocabulary shift that matters

How you talk about agentic maintenance signals whether you’re still in SDLC thinking. Here is the shift worth making:

These are not just semantic differences. They reflect a genuine shift in what the maintenance phase is for. In the SDLC, maintenance preserves what you built. In the ADLC, monitoring and feedback continuously earn the right to expand what your agents can do. That is a fundamentally different mission, and it requires a fundamentally different vocabulary.

Monday Morning Checklist:

Audit your current maintenance posture: reactive (SDLC) or continuous (ADLC)?

Identify the last time developer satisfaction was measured and when the next measurement is scheduled.

Pick one agent workflow in production and define what drift means for that workflow. If you don’t have a drift score, build one.

Review your agent autonomy grants: which ones were given at launch vs. earned through track record?

Build or review your deprecation list. Kill one capability this quarter that nobody is using.

Define ‘healthy’ for your platform in writing using the four-dimension framework. If your definition is gameable, rewrite it.

Schedule a Friday conversation with 2–3 engineers you haven’t talked to in a month. Not a survey. A conversation.